Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

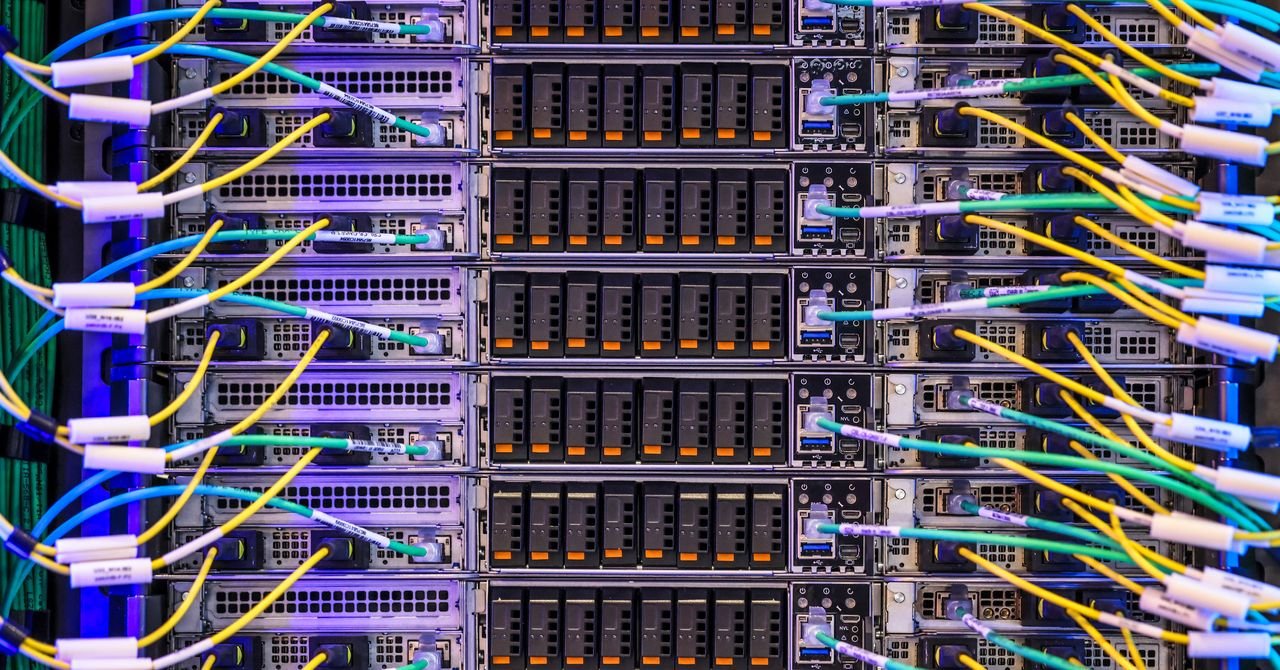

Building AI sustainably seems like a pipe dream as tech giants that previously promised emissions reductions race to build ever-bigger data centers powered by fossil fuels.

The rush to build AI for any purpose has also been fueled by the Trump administration, which has rolled back environmental protections.

Despite these headwinds, Sasha Luccioni, an AI researcher, thinks that demand for transparency in AI from customers, both businesses and individuals, is higher than ever.

Luccioni has been a leader in trying to create better visibility into AI's emissions and environmental impact in her four years at Hugging Face, an AI company, including pioneering a leaderboard documenting the energy use of open source AI models. She has also been a vocal critic of the big AI companies that, she says, are deliberately withholding information about the energy use and sustainability of their models.

Now, she's starting Sustainable AI Group, a new venture with former Salesforce sustainability chief Boris Gamazaychikov. They will focus on helping companies answer, among other things, “What are the levers that we can play with to get sponsors to slow down a bit?” Luccioni is also interested in studying the power demands of different types of AI tools, such as text-to-speech or image-to-video, an area she says is understudied.

Luccioni sat down with WIRED to talk about the importance of sustainable AI and what he wants to see from Big Tech.

This interview has been edited for length and clarity.

WIRED: I hear a lot from people who are concerned about the environment and the use of AI, but I don’t hear much from companies who are thinking about it. What have you heard specifically from people who are working with AI in their business, and what are they worried about?

Sasha Luccioni: First, they're getting a lot of pressure from staff, as well as pressure from the team and from the director, like, “You've got to count this.” Their employees are like, “You're forcing us to use Copilot. How does that affect our ESG goals?”

For many companies, AI has become a major part of their business. In that case, they should understand the risks. They need to understand where the models are running. They cannot continue to use models when they do not even know the location of the data center or the grid it is connected to. They have to know what kind of emissions are involved, traffic emissions, all these different things.

It doesn't mean you shouldn't use AI. I think we are past that. It is about choosing the right models, for example, or sending a signal that cleaner energy sources are needed, so that customers are willing to pay more for data centers that are powered by cleaner energy. There are ways to do this, and it's a matter of finding believers in the right places.

I would also imagine that the international standard-setting landscape is very different from the US, right? The US government won't talk about it, but other governments do.

In Europe, they have the AI Act. Sustainability has been a huge part of it since the beginning. They put a bunch of provisions in there, and now the first reports are coming out.

Even Asia is trying to be transparent. The International Energy Agency has been doing these reports (on AI and energy use). I was talking to them, and they said that some countries are realizing that the IEA gets its numbers from countries, and countries don't have these numbers for data centers. They can't make future decisions, because they need numbers to know “Okay, so we need X in the next five years” or whatever. (Other countries) are starting to push back on data center builders.